Unsafe and discriminatory algorithms: ‘Regulating AI begins with experimentation’

AI, data and algorithms are developing at breakneck speed, while legislation inevitably lags behind. But laws and regulations are essential – look no further than the Dutch childcare benefits scandal. ‘To regulate, we need to experiment, but responsibly.’

This is the central message of Anne Fleur van Veenstra’s inaugural lecture on 20 March. Van Veenstra is Professor by Special Appointment of Governance of Data and Algorithms for Urban Policy, a chair endowed by TNO in collaboration with the Leiden-Delft-Erasmus Centre for BOLD Cities. She is also Director of Science at TNO Vector and has spent years researching the societal impact of digitalisation.

‘Until the childcare benefits scandal, my field was seen as dull and abstract. But now everything is moving so fast, everyone recognises its importance. If we want to guide these developments, we have to keep experimenting. Even if you sit down with the brightest minds to devise new rules, you’ll still be too late and be playing catch-up.’

Clear rules for AI

The Dutch Data Protection Authority (DPA) agrees. Last week, it urged the new government to accelerate the implementation of AI regulation and oversight, adding that organisations wanting to adopt AI urgently need clarity. It also warned of the risks posed by unsafe and discriminatory algorithms, against which law enforcement agencies cannot take action.

For Van Veenstra, how technology influences government has been a fascinating field for years. Whether we focus on seizing the opportunities or mitigating the risks of AI, we must steer the process. In her lecture, she outlines three perspectives that help shape this approach: the innovation perspective, the values perspective and the transition perspective.

Dilemmas

‘We can’t assume we’ll achieve all three at once: that’s the ideal world. At present, these perspectives mainly cause dilemmas’, she explains. The innovation perspective means experimenting. ‘It’s about seeing whether an algorithmic or AI system can be used for a specific application to improve public services or processes.’

The values perspective is about identifying the risks of AI systems in advance, such as privacy breaches, discrimination or exclusion. Systems that fail to protect against these risks can be banned.

The transition perspective focuses on digital sovereignty and autonomy for organisations, businesses and governments, ‘instead of being dependent on big tech.’

‘If you look at the AI Act, for example, you’ll see that it hasn’t even been implemented yet, and businesses already find it too complicated. It’s based on the values perspective, as conceived by policymakers, but from the innovation perspective, it needs to be far simpler. Regulation is caught in a tug-of-war between these three perspectives.’

So how should we go about it? According to Van Veenstra, we should experiment responsibly. ‘Give it a go and learn from the experience. We can’t ban everything, but we can’t let it run unchecked either. Instead, we should set up AI-related experiments and work out how real government processes use AI and what the impact is. Once you know what AI was used for in those experiments, you’ll know the risks and can draw on the insights. Ultimately, our research is relevant to any organisation wanting to use AI responsibly.’

The inaugural lecture will be streamed live on 20 March.

Talking points

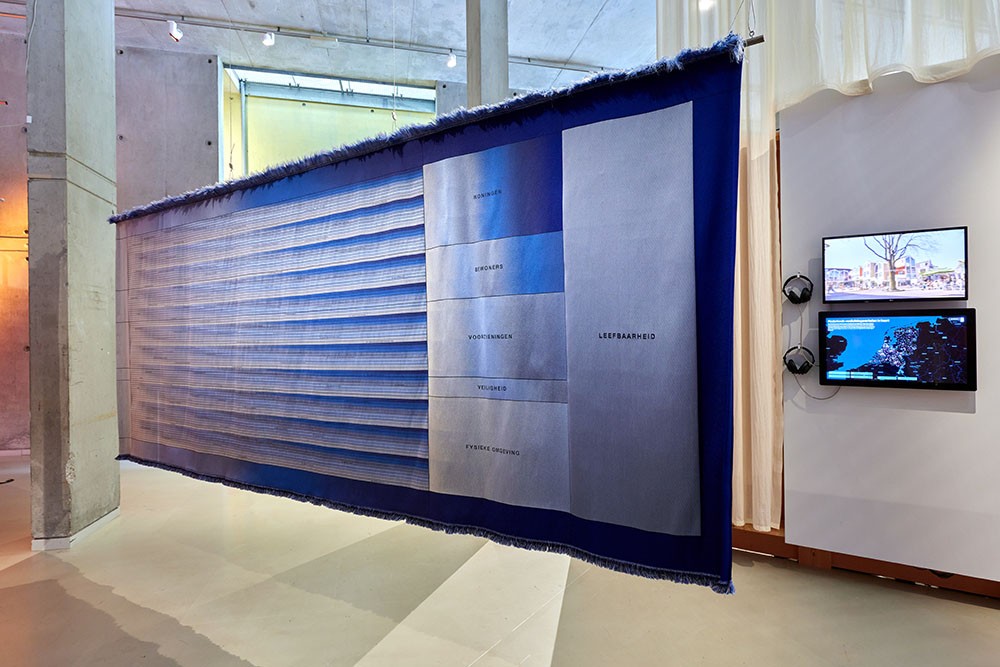

The artwork ‘Stof voor Gesprek’ (talking points) represents the ‘Leefbarometer’ project. It also depicts an algorithm used by the Municipality of Rotterdam to predict the quality of life in the city. Each box on this wall hanging, more than a hundred in total, corresponds to a data point. The relationships between these data points are determined by an algorithm that generates a result based on the data: a predictor of quality of life.