AI in education

ChatGPT and other forms of Generative AI are increasingly present in education, including at FSW. SOLO advises and informs on this topic. On this page you will find information about AI at FSW and activities you can attend.

News

The first AI meetup of 2024 began with a brief update on the launch of the GenAI Brightspace template and the faculty GenAI guidelines for students. In addition, an upcoming meeting was announced at which a group of Honors Class students will present a serious game on AI in Education, which they developed as their final assignment.

E-learning for students

The main guest of this meetup was Agnesa Gashi (LLInC), who has worked with the Faculty of Humanities (FGW) to develop an e-learning module for students on GenAI and LLMs in the academic community. Among other things, Agnesa explained the development process, which used Learning Experience Design, a method widely used within LLInC. It is a seven-step process; LLInC supported FGW in the first two steps, after which the faculty continued to develop the final product themselves.

Five chapters

The e-learning module consists of five chapters. Using videos, text and quizzes, it leads students through the basic concepts of GenAI and LLMs, explains responsible use, places GenAI in the context of academic integrity, and delves deeper into the use of machine translation (e.g., DeepL or Google Translate).

Module for lecturers

Agnesa and her colleagues at LLInC are currently developing an e-learning module on AI aimed at lecturers. It is expected to be ready by the end of March.

The AI meetups with lecturers and conversations with exam boards made clear that more guidelines and communication for students on the use of Generative AI in education were needed. SOLO addressed this and developed the following for students and course coordinators:

- The faculty student guidelines "GenAI use in education @ FSW"

- A Brightspace template "GenAI use in this course" with an accompanying manual for course coordinators

The Brightspace template "GenAI use in this course" aims to help course coordinators 1) determine whether and what use of GenAI is allowed within the context of their own course and 2) then communicate this clearly to students via Brightspace.

A bilingual Brightspace unit "GenAI use in this course / GenAI use in this course" containing the faculty guidelines and template has been added to all 2324-S2 and 2324-HS Brightspace courses of our faculty. It is up to the course coordinator to modify the information and share it with students. Course coordinators are notified by their director of education.

At the AI in Education meetup on 23 November, colleagues from all four institutes were present. This provided a broad perspective on the topics discussed. Two topics were on the agenda.

Template

SOLO presented the first version of the "GenAI use in this course" template. This template is being developed as a result of discussions with faculty and exam boards. The purpose of this template is:

- To provide general information and guidelines on the use of Generative AI in teaching;

- To assist course coordinators in determining the type of GenAI use that may be allowed within a specific course;

- To communicate this to students.

Initial reactions from attendees to the template were positive. The various comments will be included in a future version.

Priorities

What short-term steps are important to take now when it comes to AI in Education? The attendees were invited to indicate what they think should be prioritized. This resulted in the following list (in order of priority):

| Priority | Item |
| --- | --- |
| 1 | Guidelines for examination boards regarding responsible use (citation etc.) |
| 2 | Information for students about responsible use |
| 3 | Decision framework for lecturers regarding responsible (and meaningful) use in education |
| 4 | More dynamic information provision (e.g. via the newsletter) |
| 5 | More training for lecturers and students on being adaptive themselves: which sources they can keep an eye on |
| 6 | To include a statement in the OERs and R&R: "technological changes can also mean changes in testing/education" |

The October Monthly Meetup on AI in Education was an active, interesting, and informative meeting. SOLO kicked off with some announcements. We drew attention to a SURF workshop "Chat-GPT: the educational implications" (in Dutch) and Npuls' theme issue Smarter Education with AI. At the request of the VCO, SOLO is going to develop a template with a checklist that lets teachers easily create an overview, for their own course, of whether and how students are allowed to use generative AI. This idea grew out of our September discussion, where the need for clear communication to students was emphasized.

Weighted decision making

The rest of the meeting was led by Francisca Jungslager (Educational Sciences). Using the case study of Greece's desired return of the Parthenon Marbles, she showed what, in her opinion, ChatGPT cannot do: make a weighted and reasoned decision based on arguments. One could very well use ChatGPT to compile a list of legal and cultural-historical arguments for and against restitution. ChatGPT could even help you come up with critical questions for those arguments. But the final weighing of those arguments, and deciding which aspect weighs more heavily, can only be done by a human being. Indeed, ChatGPT refuses to answer the question.

Added value of the lecturer

Teaching critical thinking, that is, making students think and teaching them to ask critical questions of given arguments, is the core of what we do, Jungslager argues. ChatGPT cannot replace teachers in that. It is therefore important that critical thinking skills also receive extra attention in the professional development of teachers.

What information about the use of AI in education is now available to students? Should there be more guidelines or rules? And how do you teach students to use AI responsibly? Lecturers and support staff pondered these questions at the second AI in Education @ FSW Meetup on 21 September. Here's a brief summary of what was discussed.

Ethical use

Developments within generative AI (GenAI) are so rapid that providing accurate information can be a challenge. But perhaps more importantly, students need to know where they stand when it comes to ethical use of AI. For example, when is it permissible to use ChatGPT, and when are you committing plagiarism? Leiden University provides information for students and teachers on its website about the (un)responsible use of AI in education. It is also stipulated in the R&R of the examination boards that improper use is considered fraud.

Learning module

A learning module in which students are guided step by step, such as the UvA's e-module Responsible use of Generative Artificial Intelligence in education, could also be an option for Leiden University. Such a module is most effective if it becomes a mandatory part of the curriculum, so that you can expect every student to have the same knowledge. It is important both to keep the information on GenAI up to date and to define in which situations GenAI can and cannot be used in education.

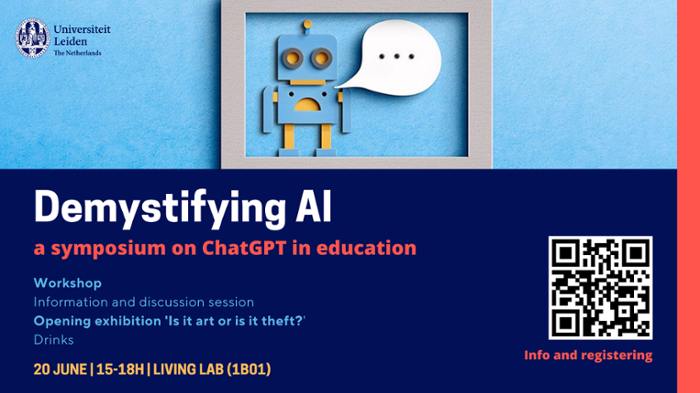

On Tuesday 20 June SOLO and LLInC hosted Demystifying AI, a symposium on the hot topic in the world of education: ChatGPT and other forms of AI. Burning issues were discussed, such as: when is the use of ChatGPT permissible? Does it make sense to ban ChatGPT? What does the future of education look like?

The symposium kicked off with a presentation by LLInC (check out the slides) on the basics of AI, use in education and the ethical issues involved. After this, participants engaged in a workshop and group discussion.

The symposium concluded with the opening of the FSW Art Committee's new exhibition, "Is it art or is it theft?" The committee presents AI-generated art and asks the question: is it still art?

Meetup AI in education @ FSW

Do you want to keep up to date with the latest developments around AI in education and exchange best practices with colleagues? In 2023-24, SOLO will organize a monthly meetup on AI in education in collaboration with LLInC for FSW teachers. Here, you will hear about the latest technological and didactical developments and be able to discuss challenges and best practices in education with fellow teachers and support staff.

GenAI in education advisory sessions

Do you want to know if your writing assignments are GenAI-proof? Or are you thinking about allowing certain GenAI use in your course and want to discuss the didactical considerations? Book a GenAI in education consultation with an education specialist from LLInC.